Apache Kafka is an open source platform for capturing, ingesting, and streaming large volumes of events. Its distributed design lets it deliver data in near real time, and its horizontal scalability makes it a strong choice for enterprises implementing event-driven integrations. Kafka is highly configurable. It is released under the Apache License 2.0, so it is free to use for both commercial and non-commercial purposes. To get started, download a release from the Apache Kafka project website.

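In a cluster, Apache Kafka spreads each topic's log partitions across multiple brokers (the servers that make up the cluster), and each partition can be replicated to several brokers for fault tolerance. Because a topic's partitions live on different brokers, reads and writes for that topic are shared among several machines. Consumers in the same consumer group can also read different partitions in parallel. Kafka can also replicate data between separate clusters (for example with MirrorMaker 2). This supports failover across data centers and lets applications read from a cluster located close to them. For more information, see How to Use Apache Kafka.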
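To make partition placement concrete, here is a minimal Python sketch built on simplifying assumptions. The helper names (`assign_partitions`, `leaders_per_broker`, `partition_for_key`) and the broker names are hypothetical. Replicas are placed round-robin, which is simpler than Kafka's real placement logic. Keys are hashed with CRC32 only as a stand-in; Kafka's default partitioner uses a murmur2 hash for keyed records.

```python
from collections import Counter
import zlib


def assign_partitions(num_partitions, brokers, replication_factor):
    """Place each partition's replicas on consecutive brokers, round-robin.

    Simplified: Kafka's own assignment also randomizes the starting broker
    and staggers follower placement; this sketch does neither.
    The first broker in each replica list is treated as the partition leader.
    """
    if replication_factor > len(brokers):
        raise ValueError("replication factor cannot exceed the number of brokers")
    placement = {}
    for p in range(num_partitions):
        start = p % len(brokers)
        placement[p] = [brokers[(start + i) % len(brokers)] for i in range(replication_factor)]
    return placement


def leaders_per_broker(placement):
    # Count how many partitions each broker leads (first replica in each list).
    return dict(Counter(replicas[0] for replicas in placement.values()))


def partition_for_key(key: bytes, num_partitions: int) -> int:
    # Stand-in hash for illustration only: Kafka's default partitioner uses
    # murmur2, so real partition numbers will differ from these.
    return zlib.crc32(key) % num_partitions


placement = assign_partitions(6, ["broker-1", "broker-2", "broker-3"], replication_factor=2)
for partition, replicas in placement.items():
    print(f"partition {partition}: leader={replicas[0]}, replicas={replicas}")
print(leaders_per_broker(placement))
print(partition_for_key(b"user-42", 6))
```

With six partitions on three brokers, each broker leads two partitions. Each broker therefore handles a similar share of the topic's reads and writes, and that even spread is what lets a topic scale beyond a single machine. Records with the same key always map to the same partition, which preserves their relative order.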

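Kafka is an open source data-streaming platform whose core APIs include the Producer, Consumer, Streams, and Connect APIs, alongside an Admin API for managing topics, brokers, and other objects. The Producer API lets applications publish streams of records to one or more Kafka topics. The Consumer API lets applications subscribe to topics and process the records written to them, so the same data can be read and processed by multiple independent applications. The Streams API transforms input streams into output streams, and the Connect API runs reusable connectors that move data between Kafka and external systems such as databases. Because consumers fetch records incrementally as they arrive, an application can process a stream continuously instead of waiting for the whole data set to be available.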
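The following Python sketch models the producer/consumer relationship on a single partition. It uses hypothetical in-memory classes (`PartitionLog`, `SimpleConsumer`) and is not the Kafka client API. It illustrates two points from the paragraph above. First, a producer appends records, and each record receives the next sequential offset. Second, a consumer pulls records in small batches as they become available rather than waiting for the whole stream.

```python
from dataclasses import dataclass, field


@dataclass
class PartitionLog:
    """Hypothetical in-memory stand-in for a single topic partition."""
    records: list = field(default_factory=list)

    def append(self, value):
        # Producer side: each record is appended and gets the next offset.
        self.records.append(value)
        return len(self.records) - 1

    def fetch(self, offset, max_records):
        # Broker side of a fetch: up to max_records starting at offset.
        return self.records[offset:offset + max_records]


class SimpleConsumer:
    """Hypothetical consumer that pulls records and tracks its own position."""

    def __init__(self, log):
        self.log = log
        self.position = 0  # offset of the next record to read

    def poll(self, max_records=2):
        batch = self.log.fetch(self.position, max_records)
        self.position += len(batch)
        return batch


log = PartitionLog()
for event in ["signup", "login", "purchase"]:
    log.append(event)

consumer = SimpleConsumer(log)
first = consumer.poll()      # ["signup", "login"]
log.append("logout")         # new data arrives while the consumer is running
second = consumer.poll()     # ["purchase", "logout"]
third = consumer.poll()      # [] (caught up; a real consumer would poll again later)
```

In the official Java client, `KafkaProducer.send` and `KafkaConsumer.poll` play these roles. Those real calls also handle networking, batching, and offset commits, which this sketch leaves out.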

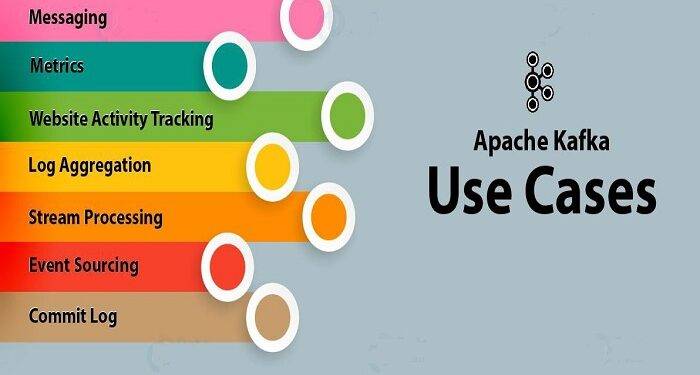

Despite a few limitations, Apache Kafka is a good fit for many businesses and organizations. It has no built-in request/reply pattern, and it does not offer classic point-to-point queues in which a message is removed as soon as one consumer takes it. Instead, queue-like load sharing is achieved with consumer groups. Kafka is built around publish/subscribe messaging and works well as a replacement for a traditional message broker. Because it can handle large volumes of data, it also suits many large-scale message-processing applications. If you need a distributed streaming platform, Apache Kafka is a strong candidate.
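The difference between publish/subscribe and a point-to-point queue can be sketched in a few lines of Python. In this hypothetical single-partition `Topic` class, each consumer group keeps its own offset. As a result, every group receives every message, and reading a message does not remove it. This mirrors how Kafka keeps messages according to its retention settings instead of deleting them when they are consumed. In a classic point-to-point queue, by contrast, a message is gone once one consumer has taken it.

```python
class Topic:
    """Hypothetical single-partition topic with per-group committed offsets."""

    def __init__(self):
        self.log = []            # messages are kept after being read
        self.group_offsets = {}  # group id -> offset of the next unread message

    def publish(self, message):
        self.log.append(message)

    def poll(self, group_id):
        # Each group reads from its own offset, so groups never take
        # messages away from one another; reading does not delete anything.
        start = self.group_offsets.get(group_id, 0)
        batch = self.log[start:]
        self.group_offsets[group_id] = len(self.log)
        return batch


orders = Topic()
orders.publish("order-1")
orders.publish("order-2")

billing = orders.poll("billing")        # ["order-1", "order-2"]
shipping = orders.poll("shipping")      # ["order-1", "order-2"]
orders.publish("order-3")
billing_next = orders.poll("billing")   # ["order-3"]
```

Adding a new group, such as an analytics service, requires no change to the producer. The new group simply starts reading the log from its own offset.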

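Producers write data to the Kafka cluster, and consumers read it. Producers publish messages to one or more topics. Because each topic is split into partitions, those writes can be spread across brokers for scalability. Consumers use a pull model: when a consumer is ready for more data, it fetches the next batch of messages from the brokers that host its partitions. Consumers that share the same group ID form a consumer group. Kafka divides a topic's partitions among the group's members so that each partition is read by exactly one consumer in the group at a time. A single consumer can be assigned several partitions, but if a group has more consumers than partitions, the extra consumers sit idle.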
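The rule that each partition goes to exactly one consumer in a group can be illustrated with a range-style assignment. The approach is similar in spirit to Kafka's `RangeAssignor` but simplified to a single topic. `range_assign` is a hypothetical Python helper, not the client's actual implementation. Kafka also ships other assignment strategies, such as round-robin and sticky assignors.

```python
def range_assign(partitions, consumers):
    """Range-style assignment of one topic's partitions to a consumer group.

    Sorted partitions are split into contiguous ranges; when they do not
    divide evenly, the first consumers (in sorted order) get one extra.
    """
    if not consumers:
        raise ValueError("a consumer group needs at least one member")
    consumers = sorted(consumers)
    partitions = sorted(partitions)
    per_consumer, extra = divmod(len(partitions), len(consumers))
    assignment = {}
    start = 0
    for i, consumer in enumerate(consumers):
        count = per_consumer + (1 if i < extra else 0)
        assignment[consumer] = partitions[start:start + count]
        start += count
    return assignment


print(range_assign([0, 1, 2, 3, 4], ["consumer-b", "consumer-a"]))
# {'consumer-a': [0, 1, 2], 'consumer-b': [3, 4]}
print(range_assign([0, 1], ["c1", "c2", "c3"]))
# {'c1': [0], 'c2': [1], 'c3': []}  (c3 is idle)
```

Each partition appears in exactly one consumer's list, so no two members of the group process the same partition at once. The second call shows why adding consumers beyond the partition count does not increase parallelism.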

Both Kafka and Amazon Kinesis Data Streams are distributed systems, but they are deployed differently. Kafka consists of servers (brokers) and clients that communicate over a high-performance binary protocol on top of TCP. It can run on bare-metal hardware, virtual machines, or containers, either on premises or in the cloud. Kinesis, by contrast, is a fully managed AWS service that clients reach through its HTTPS API, so you do not run its servers yourself. Because Kafka is open source and can be self-managed, it gives you more flexibility in production: you decide how many brokers the cluster has and how it scales.
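To show what Kafka's binary protocol over TCP looks like on the wire, this Python sketch builds a request frame but does not send it. It follows our reading of the Kafka protocol guide. Every message starts with a 4-byte big-endian size. The size is followed by a request header (API key, API version, correlation ID, client ID) and then the request body. The choice of the ApiVersions request (API key 18, version 0, empty body) and the client ID `demo-client` are illustrative assumptions. Newer API versions use a different, "flexible" header format that this sketch does not handle.

```python
import struct


def encode_request(api_key, api_version, correlation_id, client_id, body=b""):
    """Frame a request as: INT32 size, then request header v1, then the body.

    Header v1 layout: INT16 api_key, INT16 api_version, INT32 correlation_id,
    NULLABLE_STRING client_id (INT16 length, -1 for null, then UTF-8 bytes).
    All integers are big-endian (network byte order).
    """
    if client_id is None:
        client = b""
        client_len = -1
    else:
        client = client_id.encode("utf-8")
        client_len = len(client)
    header = struct.pack(">hhih", api_key, api_version, correlation_id, client_len) + client
    message = header + body
    return struct.pack(">i", len(message)) + message


def decode_size(frame):
    # The first four bytes tell the reader how many bytes follow.
    return struct.unpack(">i", frame[:4])[0]


# Assumed example: ApiVersions (api_key 18) version 0, whose request body is empty.
frame = encode_request(api_key=18, api_version=0, correlation_id=1, client_id="demo-client")
print(len(frame), decode_size(frame))  # 25 21
```

The size prefix lets a reader on a TCP stream know exactly where one message ends and the next begins. The correlation ID lets a client match each response to the request that produced it.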